Different Levels of Visualization

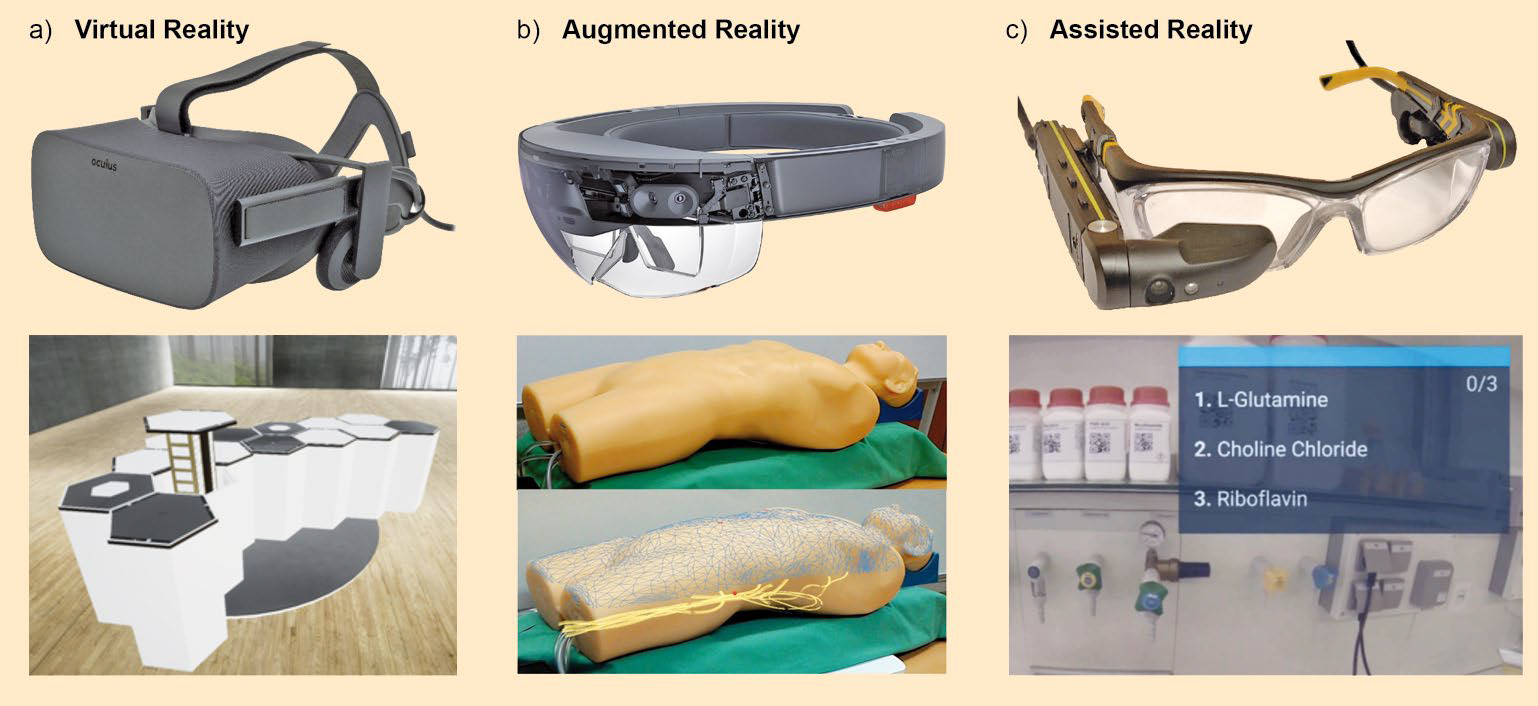

The transition between the real and virtual world keeps getting smoother, especially when using smart glasses and other head-mounted displays. Applications can be distinguished by the degree of virtualization they use. We propose a subdivision into three levels: virtual reality (VR), augmented reality (AR), and assisted reality (AsR) (see Fig. 1-1), because these terms provide a good way to delineate the different technologies at the application level.

Figure 1-1. Examples of glasses systems and representations of virtualization in virtual reality (VR), augmented reality (AR), and assisted reality (AsR). The representation corresponds to what a user of the glasses system would see. VR: Oculus Rift by Oculus VR; virtual 3D rendering of a futuristic laboratory concept [I1]. AR: HoloLens by Microsoft [I2] (CC BY-SA 4.0); positionally accurate overlay of blood vessels on a medical training dummy to simplify medical applications (modified according to [I3]). AsR: M300 by Vuzix; overlay of components for the production of a medium.

In virtual reality, a person puts on a VR headset that completely shields them from the real world. Only virtual content is displayed. VR applications are, thus, not suitable for direct use in a laboratory. However, they are well suited to training, planning, and visualization, because a virtual world can depict processes of any complexity without having to take spatial or physical limitations into account. This allows laboratory equipment to be planned virtually and its functionality to be tested.

Augmented reality extends reality: the real environment is supplemented with a digital layer provided by the AR glasses. In AR applications, the real world is overlaid with positionally accurate, virtual 3D content. Ideally, this gives the impression that the real and virtual elements are fused.

AR applications have a great deal of potential in laboratory work, because they can guide the user visually and, thanks to their 3D camera, give good feedback on how a step was carried out. Examples include the correct positioning of a container or of a sample in a centrifuge.

The use of AR is currently hampered by two problems. First, AR glasses are relatively heavy and limit the field of vision, which makes wearing them for long periods tiring. Second, AR content is expensive to produce because it requires 3D models as well as supplemental models for interaction with the real environment.

Assisted reality overlays information onto the user’s field of vision. Most AsR glasses are monocular systems that present information to only one eye. Possible applications include displaying media formulations or safety data about specific chemicals.

At this time, AsR applications are the most heavily tested applications for laboratory work. AsR glasses are lighter than AR glasses and barely affect the field of vision. In addition, AsR applications are relatively easy to develop.

These three levels are just a rough categorization for introductory purposes. There is a great deal of overlap between AR and AsR. For example, a simple text field can be superimposed in an AR application. Conversely, an AsR application can show a live image from a camera and use color to highlight some elements, such as QR codes (see Fig. 1-2).

Figure 1-2. Screenshot of the ChemFinder app. The app shows a live image from the camera and simultaneously searches it for QR codes. All of the QR codes that are found are read and checked for data such as the expiration date. QR codes from expired chemicals are overlaid with a red square in the live image; unexpired ones are overlaid with a green square.
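
The check described for the ChemFinder app can be illustrated with a minimal Python sketch. It is not the app's actual implementation: the payload format (`"<name>;<YYYY-MM-DD>"`), the `QRDetection` type, and the function names are all assumptions made for illustration, and the sketch starts from QR codes that have already been detected and decoded. It also assumes a chemical counts as expired only once its expiration date lies strictly in the past.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # x, y, width, height in image pixels


@dataclass
class QRDetection:
    """A decoded QR code found in one camera frame (hypothetical type)."""
    payload: str  # assumed format: "<chemical name>;<expiry as YYYY-MM-DD>"
    box: Box      # where the code was found in the live image


def parse_expiry(payload: str) -> date:
    """Extract the expiration date from the assumed payload format."""
    _name, _sep, expiry = payload.partition(";")
    return date.fromisoformat(expiry.strip())


def overlay_color(payload: str, today: date) -> str:
    """Return "red" for an expired chemical, "green" otherwise.

    Assumption: a chemical expiring today is still treated as usable.
    """
    return "red" if parse_expiry(payload) < today else "green"


def annotate(detections: List[QRDetection], today: date) -> List[Tuple[Box, str]]:
    """Pair each detected code's position with the square color to draw."""
    return [(d.box, overlay_color(d.payload, today)) for d in detections]


if __name__ == "__main__":
    frame = [
        QRDetection("Ethanol;2020-01-31", (10, 20, 50, 50)),
        QRDetection("NaCl;2025-12-31", (100, 20, 50, 50)),
    ]
    for box, color in annotate(frame, today=date(2021, 5, 3)):
        print(box, color)
```

In a real application, the list of detections would come from a QR decoder running on each camera frame, and the returned colors would be used to draw the squares onto the live image.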

References

[I1] Evan-Amos, Oculus-Rift-CV1-Headset-Front, Wikimedia Commons, 2021. (accessed May 3, 2021)

[I2] Ramadhanakbr, Ramahololens, Wikimedia Commons, 2016. (accessed May 3, 2021)

[I3] I. Kuhlemann et al., Towards X‐ray free endovascular interventions – using HoloLens for on‐line holographic visualisation, Healthc. Technol. Lett. 2017, 4, 184–187. https://doi.org/10.1049/htl.2017.0061
